After 30+ years of connected tech, digital security ingredients labels set to arrive globally

You walk into an electronics store in search of a webcam to help keep your family safe and your video private. You arrive at the webcam aisle, faced with box after box of competing products. 4K video. Unlimited cloud storage. Excellent security monitoring plans. Ease of use. Great video streaming performance. Awesome battery life.

What you don’t see anywhere on any box: does this product ensure that video data is private only to you and your family and will never be shared without your consent? Can you protect access to your video data account with multi-factor authentication? What sensors does the product have built in, what data (other than video) is collected from the device, and how is that data used and shared with third parties? For how long will this product be supported with updates so it remains safe? Have the product’s security and privacy capabilities been evaluated by an independent lab?

There are no answers to these questions on any of the boxes. No web link or QR code where you can learn the answers. So you make a choice based on speeds and feeds, bells and whistles, and price. And you may choose a product that has awful security and privacy. In fact, if you select randomly, you are likely taking home a product whose digital safety capabilities would be disappointing, if you knew what they were (ironic, since you’re buying a safety product).

That is sad, and none of us should accept this state. We deserve better. And it’s not just for webcams. Phones. TVs. Routers. Gaming consoles. Refrigerators. Washing machines. Vacuum robots. Every connected product in the store is lacking the most basic information to help you make a smarter decision about your digital safety.

This is not a new problem. Since the dawn of the digital world decades ago, one of the biggest failures in safety - privacy, counter-abuse, security - has been the lack of transparency around the relative ability of digital products and services to meet safety objectives.

The lack of transparency has become an increasingly significant headwind as our digital connectivity has grown, causing immense inefficiency and poor safety outcomes. But there is, finally, some light at the end of the tunnel, and in the next few years, a digital safety label on connected products should become common, like food ingredients labels, enabling us to make safer purchasing decisions and driving product developers to build better safety into products.

The journey has indeed been a long one, and I share my personal perspective on it below.

Starting with the hardest problem: high assurance certification

In the decade between 1998 and 2008, I led a team developing an operating system and hypervisor technology whose goal was to provide high confidence in safety for the most critical IoT Things: medical devices, avionics, autonomous vehicles, national security encryptors, etc. In 2008 we certified the technology to both the highest level of safety evaluated by third-party certification authorities and the highest level of software security. That level is similar to the confidence demanded of the most secure hardware chips, such as Google Titan-M2, where the goal is to demonstrate effective deterrence against “high potential” attackers (sometimes characterized by AVA_VAN.5 under the ISO 15408 standard; see Sections 16 and B.4 in this guide for background), equivalent to well-resourced state-sponsored threats.

The security certification took approximately six years to complete, and the government-funded agencies required five years (!) to finalize the security requirements document. To the best of my knowledge no security flaws were discovered by the evaluators who had access to source code and practically unlimited resources to review the product over several years. The inefficiency and bureaucracy of the process were staggering. It’s no wonder no other software products have been evaluated in the same way since.

Yet the users of the product deserved to have in their hands the results of this evaluation. The transparency around the security ingredients was something they needed to make better decisions about protecting some of the world’s most critical systems. But it had become obvious that government agencies weren’t properly resourced to manage programs that could move at the speed of consumer products.

My book on connected product security, published about a decade ago, dedicated a section, “Independent expert validation”, to this concept of evaluation and transparency for the security ingredients in digital products. But scaling the concept for consumer electronics remained an elusive dream.

The first consumer electronics baseline security transparency program

In 2015, while I was leading product security at BlackBerry, our team’s responsibilities included supporting security evaluation programs, including FIPS 140-2 and Common Criteria certifications for smartphones. The level of assurance derived from these government-managed certifications was minimal, but because of the regulations and aforementioned bureaucracy, the amount spent to go through the motions was immense and the ROI questionable. This reinforced the realization that industry and NGOs were needed to develop scalable consumer-oriented programs. Serendipitously, I was connected by my brother-in-law, a physician, to the diabetes device community, which had been the target of security research shining a light on poor security practices in connected insulin pumps and the need for better transparency. This led me to write the DTSec standard and its recent descendant, IEEE 2621. These described not only baseline security requirements but also how to run an evaluation program offering multiple levels of assurance at reasonable cost. But we didn’t just need a standard; we needed to demonstrate how to run an efficient evaluation and monitoring program. Partnering with Dr. David Klonoff, an endocrinologist with a unique passion for cybersecurity, we brought in a variety of medical professionals, government regulators (including FDA), and device manufacturers to stand up a successful program and prove that consumer electronics security evaluation could be done efficiently and economically by relying on an industry-led, rather than government-led, scheme. To the best of my knowledge, this was the first program to evaluate baseline security capabilities of consumer electronics, and several diabetes devices were evaluated and certified under the program.

Figuring out how to truly scale the impact

After joining Google in 2017, I sought to apply this concept to all IoT products and created a new scheme, partnering with ARM and UL, initially targeting consumer webcams. But in 2018, we learned about the concurrent work of the Internet of Secure Things Alliance, which was doing essentially the same thing but had more organizations (including the Zigbee standards group) already involved. We decided to combine forces, and I joined the alliance board to continue the development of a broader IoT scheme. We partnered with great folks from Amazon, Meta, T-Mobile, Resideo, Legrand, Comcast, and more. The effort was led by the alliance’s CTO, Brad Ree, who did an amazing job managing a group of competing tech companies. We certified numerous Google products, including Nest smart home products and Pixel phones. We had plans to expand the scheme to include mobile apps and back-end cloud services. Unfortunately, the board members disbanded. But by now, we’d built a great team within Google who not only had the proper security and privacy background but also the experience working on numerous other certification programs to drive consumer product security transparency programs anew. We partnered with GSM Association to work on mobile device security, Connectivity Standards Alliance (CSA, fka Zigbee Alliance) for smart home digital products, and the App Defense Alliance (ADA) for mobile apps and SaaS cloud apps leveraging OWASP. I’ve had the fortune of partnering with my teammate Eugene Liderman, whom I call the magician for how he manages to cut through bureaucracy and bring organizations together, many other great folks within Google, and a ton of like-minded leaders outside of Google who have joined us on this journey. We’ve been hard at work building global schemes that scale to the global IoT, and have started launching them.

The ADA, CSA, and GSMA evaluation and transparency schemes are coming together just in time for a wave of global government efforts to encourage the use of digital security and privacy ingredients labels on IoT products.

So what is the role of the government in this?

Government agencies are not resourced to manage global-scale evaluation and monitoring programs, but they are set up to leverage global NGO schemes - ones that have strong governance and broad industry participation like those we’ve developed at ADA, CSA, and GSMA - within their own national labeling programs. And after talking to many government leaders about this, it seems they are eager to work in a public/private partnership for maximum impact.

Singapore launched the first national IoT security labeling program, and the leadership and resolve of Singapore’s Cyber Security Agency have been globally impactful. When the program was launched, we worked to be the first tech company to certify products against the highest level (Level 4) offered by the Singapore scheme. However, when invited to speak at the Singapore Cyber Conference in 2020, I cautioned that bespoke national labeling schemes were not going to work; rather, we needed Singapore and other governments to harmonize their labeling programs by leveraging international NGO schemes, like Connectivity Standards Alliance’s. Thankfully, Singapore’s leaders in this area have agreed and have been working to build cross-recognition of their national label with the Connectivity Standards Alliance.

The UK was also an early leader in IoT standards and regulation. Led by Peter Stephens in the government ministry then called the Department of Culture, Media, and Sport (DCMS), and drawing on the leadership and expertise of David Rogers, the UK published a draft standard for IoT baseline security requirements which later became ETSI EN 303 645. Peter also led DCMS’s effort to push for legislation that would require a few of the baseline requirements to be present in all IoT products sold in the UK. I provided written and oral testimony to Parliament, and the law was passed. While the law is currently focused on a sensible minimum baseline of requirements (roughly: must not use universal default passwords, must publish security lifetime policy, must have a method for receiving vulnerability reports), the main gap in the legislation is that it does not require transparency against a more complete set of baseline requirements. Mandating a large set of requirements may pose an undue burden on smaller businesses whose practices pose a relatively small risk to consumers, but requiring a developer to simply state what they do (or don’t do) provides enhanced transparency to help consumers make better decisions while not imposing product changes.
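To make the difference between a mandate and transparency concrete, here is a minimal Python sketch. The three fields mirror the baseline items summarized above; the class name, field names, and both functions are hypothetical illustrations, not part of the UK legislation or of any labeling scheme.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class BaselineDeclaration:
    """Hypothetical self-declaration covering the three baseline items."""
    no_universal_default_password: bool
    publishes_security_lifetime_policy: bool
    accepts_vulnerability_reports: bool


def meets_mandate(decl: BaselineDeclaration) -> bool:
    """Mandate-style check: every baseline item must be satisfied."""
    return all(asdict(decl).values())


def transparency_label(decl: BaselineDeclaration) -> dict[str, str]:
    """Transparency-style output: state what the product does or does not do."""
    return {name: "yes" if value else "no" for name, value in asdict(decl).items()}


# An illustrative (made-up) product that misses one baseline item.
camera = BaselineDeclaration(
    no_universal_default_password=True,
    publishes_security_lifetime_policy=False,
    accepts_vulnerability_reports=True,
)
print(meets_mandate(camera))
print(transparency_label(camera))
```

A mandate reduces the product to pass or fail, while the transparency output keeps every answer visible, including the "no", without forcing a product change.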

The United States is also developing a labeling program, and we’ve provided feedback to the various agencies working on it, including participating in strategy sessions and publishing a blog and additional guidance for the US and other national labeling efforts.

Mandatory vs. voluntary labeling regimes

The reason IoT security is often so poor is that product manufacturers lack sufficient economic incentive to do better. While there are many ways to build such incentives, the simple, proven method of driving developer behavior is to ensure that consumers have sufficient awareness of product differences that then drive their purchasing behavior. Developers invest in product features that customers are willing to pay for, and we’ve armed consumers with user reviews, product reviews, and product ingredients labels that give them the information they need to make such decisions. But that transparency is still missing for digital safety capabilities. We know consumers care about privacy and security, but unlike battery life and screen resolution, digital safety is harder to measure, even for basic aspects like whether the device is properly encrypting data or still getting security updates. These can’t be easily perceived by the consumer or evaluated by the typical consumer reviewer. The aforementioned standards and monitoring schemes are addressing this gap, and now we need transparency that enables consumers to see the results!

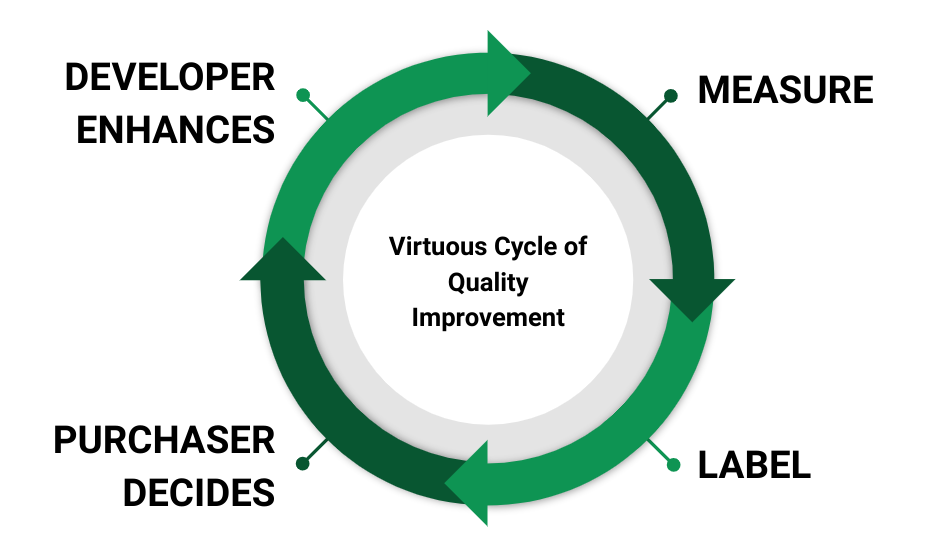

Just like any other aspect of product quality that is measurable and transparent, when consumers see the differences, we get a virtuous cycle of improvement:

This cycle is evident in so many things, from food and drugs to the energy usage in consumer electronics.

The latter provides a lesson regarding whether digital safety labels should be voluntary. The US government introduced voluntary energy use labeling in 1992 and a specific television category in 1998. At time of writing, there are 692 television products that show up in Best Buy via online search, with only 3.5% (24) of them showing up as Energy Star certified. The voluntary certification has not enabled consumers to broadly compare energy usage across products in similar categories. The good news, however, is that the US government began mandating the EnergyGuide label, enabling simple comparisons of energy cost and usage, for televisions in 2011. If you’ve purchased a flat panel TV in the past decade in the US, there’s a good chance you were comparing energy usage via EnergyGuide labels. EnergyGuide does not force manufacturers to meet some maximum energy consumption level. The program simply requires manufacturers to be transparent about energy usage and cost estimates so that consumers can make informed purchasing decisions. And because we now use this data to make better decisions, manufacturers have a strong incentive to improve energy usage in order to compare favorably. With EnergyGuide, the US government succeeded at encouraging healthy competition that helps consumers through mandatory transparency rather than mandatory requirements or levels of performance.
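The 3.5% figure follows directly from the two counts quoted above. A quick check in Python, where the variable names are mine and the numbers are the ones stated in the text:

```python
total_tvs = 692      # television listings found, per the text
certified_tvs = 24   # listings shown as certified, per the text

share = certified_tvs / total_tvs
print(f"{share:.1%}")
```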

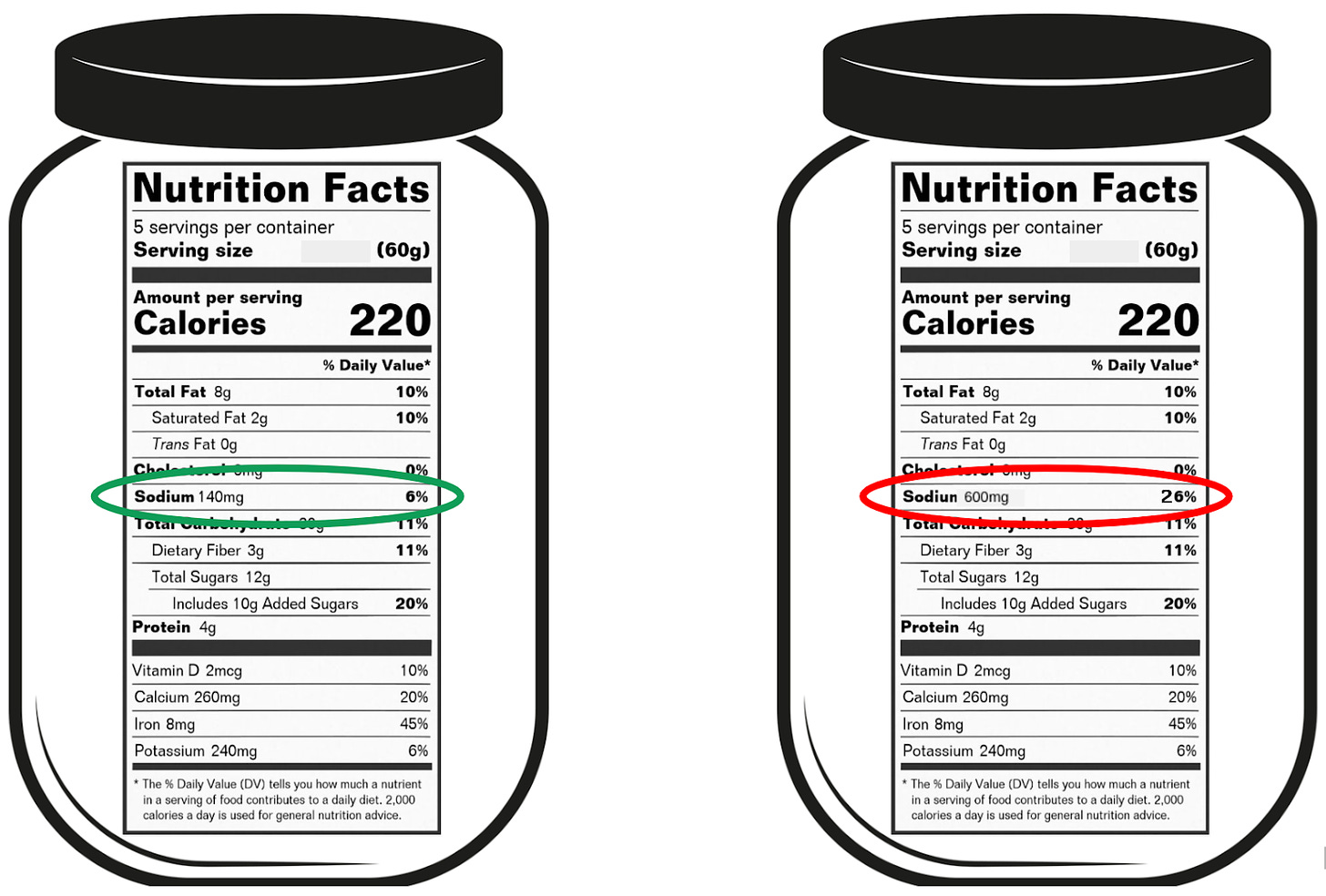

For another example of why mandatory levels are unnecessary but transparency necessary, let’s take a look at food ingredients. Imagine you’re sensitive to sodium intake and are at the grocery looking for spaghetti sauce. You’ve narrowed your search to two popular brands. But you want to make a selection that helps minimize sodium consumption. One product has 140 mg of sodium per serving and the other has 600 mg per serving:

The government has not mandated a maximum level of sodium, but the required transparency afforded by the ingredients labels enables a more informed, nutritious choice.

But now imagine if labels were voluntary, and the manufacturer of the product on the left has not published a label:

How is the consumer supposed to make a better decision here? Which of these products is healthier? Consumers may make an unhealthy decision, assuming that the labeled product has less sodium, when it actually has over four times more! The lack of a label in some products prevents a basis for comparison, rendering a voluntary labeling program ineffective and in some cases, causing more harm than good.
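The comparison problem can be sketched in a few lines of Python. The function name and the use of `None` for an unpublished label are illustrative assumptions; the sodium values are the ones from the example above.

```python
from typing import Optional


def lower_sodium(label_a_mg: Optional[int], label_b_mg: Optional[int]) -> Optional[str]:
    """Return "A" or "B" for the lower-sodium product, or None if no choice can be made."""
    if label_a_mg is None or label_b_mg is None:
        return None  # a missing label leaves no basis for comparison
    if label_a_mg == label_b_mg:
        return None  # identical values: neither product is the better pick
    return "A" if label_a_mg < label_b_mg else "B"


# Mandatory labels: both values are published, so the choice is clear.
print(lower_sodium(140, 600))
# Voluntary labels: product A publishes nothing, so the shopper cannot tell.
print(lower_sodium(None, 600))
# Ratio behind "over four times": 600 mg versus 140 mg per serving.
print(round(600 / 140, 2))
```

With one value missing the function has nothing to compare, which is the position a voluntary regime leaves the shopper in.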

Does a security label mean a product is “secure”? Well, does having a food label mean that a product is “nutritious”? Does an EnergyGuide label mean that a TV is energy-efficient? Absolutes are generally not practical in product quality features - everything is relative - and it’s the same with security and privacy. The initial versions of national labels are intended to provide transparency around baseline best practices that can be used for product comparison. They help ensure that truly bad choices (nutrition, energy, digital safety) can be avoided by consumers, but they do not ensure that a product is meeting the best possible requirements across all relevant criteria. For digital security, a label asserting the product meets all relevant baseline requirements will not mean that the product cannot be compromised, especially by sophisticated attackers. But it does mean that consumers can make informed decisions to better protect themselves and their digital lives. For example, if a product offers a minimum commitment to security updates for five years from purchase, the consumer can select this product over another product that offers a minimum of two years of support or no commitment at all.

When considering regulation, many stakeholders (e.g. industry groups, small businesses, regulators) worry that imposing many new requirements may add undue burden to developers and limit innovation. And that is exactly why the mandate should not focus on meeting a specific set of requirements or levels but rather on transparency about how each of the requirements is met. This drives the aforementioned virtuous cycle of product improvement and better outcomes for society.

Examples of platform-mandated digital safety labels

The economic demand for better digital safety practices can be driven by regulation, but also by retailers (e.g. the earlier Best Buy example) and platform providers.

The Android platform currently defines three levels of biometric strength and mandates that manufacturers be transparent about the level met by each biometric of every device. We’re working with GSM Association to standardize how this is surfaced to users in a digital label.

Another example is Android app security certifications, highlighted in Google Play’s app safety labels. To the best of my knowledge, Google Play’s security certification information is the first consumer-facing platform/ecosystem security label in the history of the IoT. In addition to the security aspect, Google Play safety labels help consumers understand what data is collected from apps, and why, so they can make better decisions to protect their privacy. While Google does not mandate specific security or privacy requirements or levels within the label, transparency - publication of the label in the app store listing - has been mandated for all apps published starting July 2022, enabling users to make more informed decisions about the digital health of the apps they choose to install. This is also a great example of the importance of providing both security and privacy transparency ingredients labels to the IoT, even though most standards today are focusing first on security.

In addition to these transparency mandates for developers on our platforms, we have long made public commitments around transparency for our own smart home IoT products.

A framework for healthy regulation through transparency

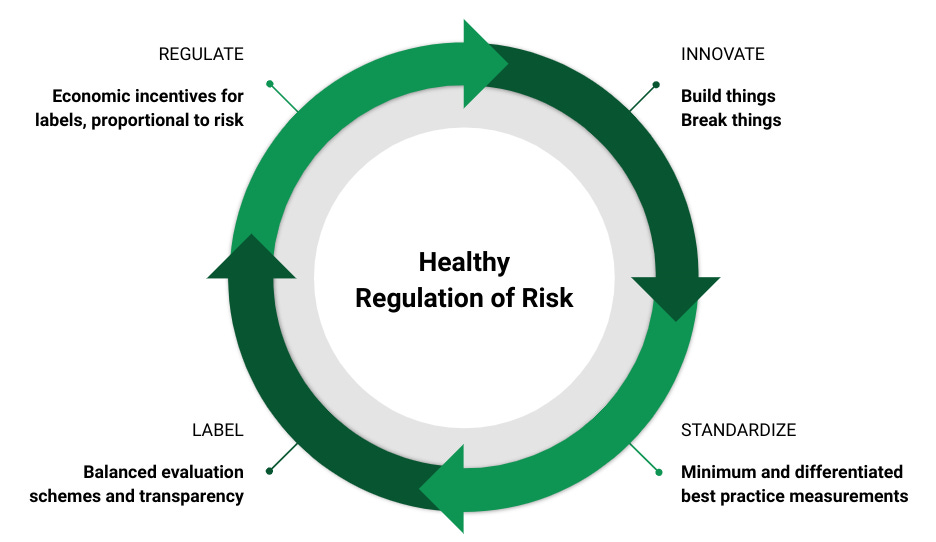

Best practices in digital security and privacy have been developed over the past several decades. The common-sense requirements around security patching, encryption, authentication, vulnerability reporting and response, etc. are well understood by security and privacy practitioners, even if they are not uniformly followed by developers. Defenses have been innovated as a response to threats and pressure-tested over time by hostile activity as well as internal red teams. That is why the process for standardizing common-sense baseline security requirements for connected products, including the aforementioned ETSI standard as well as similar ISO and NIST standards, has been relatively straightforward. Once we have reasonably good standards, we can set up scalable evaluation and monitoring schemes to produce the information that explains performance against the standards. Finally, regulators can reference these independent schemes to bring mandated transparency - through online labels - to consumers. Over time, as innovation drives new threats and defenses, this healthy cycle of innovation and proportional regulation continues:

We’re on the cusp of bringing impactful baseline security labels to connected products. But it took us over three decades to get here.

So what’s next after these initial security labels?

Security and privacy go hand in hand, and the minimum baseline standards need to incorporate common sense baselines for privacy as well, including transparency for sensors and data collection (similar to the aforementioned Google Play safety labels, but applied to all connected products), common sense controls around consent and data deletion, etc. I introduced this as a new working group in the Connectivity Standards Alliance, and progress is going well, so the future is bright there.

We also need to expand beyond the current baseline standards to enable not just the minimum but differentiated requirements that help the community understand what great security and privacy look like and to handle important requirements in specific product categories. For example, the current ETSI, ISO, and NIST baseline standards and national labeling proposals do not cover the security strength of a biometric used for authentication, and for some types of devices (not just phones), the quality of the biometric is important, and transparency is needed. Our work to incorporate biometrics into the GSMA’s scheme for mobile device security is in progress, so the future is bright there as well. There are many examples of product-specific security and privacy requirements that need to be tailored to product classes. Another simple example is transparency requirements for VPNs, such as the lack of logging of network data and the physical location of the business and its termination servers.

It’s also worth pointing out that the current labeling efforts are focused on minimum baseline security capabilities but do not yet attempt to ascertain the level of assurance that the product can withstand any particular attack potential. Perhaps the product’s security controls can resist a relatively unsophisticated attacker, but what about a better-resourced attacker, such as organized crime or a state-sponsored group? This blog started with a retrospective on high assurance evaluation, and this remains an important goal for the most critical systems upon which digital society depends. A connected autonomous vehicle transporting millions of people should be required to meet a more stringent level of assurance. Or put another way, attackers should have to spend a much higher amount to exploit a life-critical system than a refrigerator with very low market share. We know how to solve this challenge too, but to be successful, we need to land the minimum baseline requirements first so that consumers become accustomed to the concept of a digital safety label and regulators (and others) gain confidence that such systems can be developed and scaled appropriately.

We can follow a similar framework for other areas of digital safety. For example, public platforms like social networks, video, app stores, etc. all have user-generated content for which we’ve seen plenty of building and breaking innovation, but we currently lack international standards for content safety and international schemes for measuring performance against those standards and labeling content accordingly. Thus, we do not have a well-lit path for effective regulation. Hopefully the progress we’re making in digital security and privacy labels will provide an impetus for tackling content safety and moderation in a similar way.

Responsible AI, and specifically AI safety, is another example where we need an accelerated path around the innovation-standardization-labeling-regulation cycle. We don’t have three decades to spare; we may not even have three years. Rapidly evolving generative AI capabilities are democratizing the power to launch global-scale attacks. Rapid innovation (e.g. prompt injection attacks vs. prompt injection defenses) is happening, and we need the standardization, monitoring, and proportional transparency, focused initially on explaining how models, training data, model inputs, and model outputs are protected, to move far more rapidly than we’ve ever seen this cycle run. At Google, we’re working hard on ML safety and helping standards bodies and regulators navigate the way forward. Perhaps a good topic to dive deeper on in a future blog…

Finally, if you’ve gotten to the end of this (long) blog, thank you for your interest. I hope it was useful. You can listen to another summary of some of the issues in labeling in a recent Lawfare podcast I did along with Tatyana Bolton, Google’s fantastic public policy lead for connected product security.